Google is fully in our Gemini era.

Before we get into it, I want to reflect on this moment we’re in. We’ve been investing in AI for more than a decade — and innovating at every layer of the stack: research, product, infrastructure, and we’re going to talk about it all today.

Still, we are in the early days of the AI platform shift. We see so much opportunity ahead—for creators, for developers, for startups, for everyone. Helping to drive those opportunities is what our Gemini era is all about. So let’s get started.

The Gemini era

A year ago on the I/O stage we first shared our plans for Gemini: a frontier model built to be natively multimodal from the beginning, that could reason across text, images, video, code, and more. It marks a big step in turning any input into any output — an “I/O” for a new generation.

Since then, we introduced the first Gemini models, our most capable yet. They demonstrated state-of-the-art performance on every multimodal benchmark. Two months later, we introduced Gemini 1.5 Pro, delivering a big breakthrough in long context. It can run 1 million tokens in production, consistently, more than any other large-scale foundation model yet.

We want everyone to benefit from what Gemini can do. So we’ve worked quickly to share these advances with all of you. Today more than 1.5 million developers use Gemini models across our tools. You’re using it to debug code, get new insights, and build the next generation of AI applications.

We’ve also been bringing Gemini’s breakthrough capabilities to our products in powerful ways. We’ll show examples today across Search, Photos, Workspace, Android and more.

Product progress

Today, all of our 2-billion user products use Gemini.

And we’ve introduced new experiences too, including on mobile, where people can interact with Gemini directly through the app, now available on Android and iOS. And through Gemini Advanced which provides access to our most capable models. Over 1 million people have signed up to try it in just three months, and it continues to show strong momentum.

Expanding AI Overviews in Search

One of the most exciting transformations with Gemini has been in Google Search.

In the past year, we’ve answered billions of queries as part of our Search Generative Experience. People are using it to search in entirely new ways, asking new types of questions, making longer and more complex queries, even searching with photos, and getting back the best the web has to offer.

We’ve been testing this experience outside of Labs. And we’re encouraged to see not only an increase in search usage but also an increase in user satisfaction.

I’m excited to announce that we’ll begin launching this fully-revamped experience, AI Overviews, to everyone in the U.S. this week. And we’ll bring it to more countries soon.

There’s so much innovation happening in Search. Thanks to Gemini we can create much more powerful search experiences, including within our products.

Introducing Ask Photos

One example is Google Photos, which we launched almost nine years ago. Since then, people have used it to organize their most important memories. Today that amounts to more than 6 billion photos and videos uploaded every single day.

And people love using Photos to search across their life. With Gemini we’re making that a whole lot easier.

Say you’re paying at the parking station, but you can’t recall your license plate number. Before, you could search Photos for keywords and then scroll through years’ worth of photos, looking for license plates. Now, you can simply ask Photos. It knows the cars that appear often, it triangulates which one is yours, and tells you the license plate number.

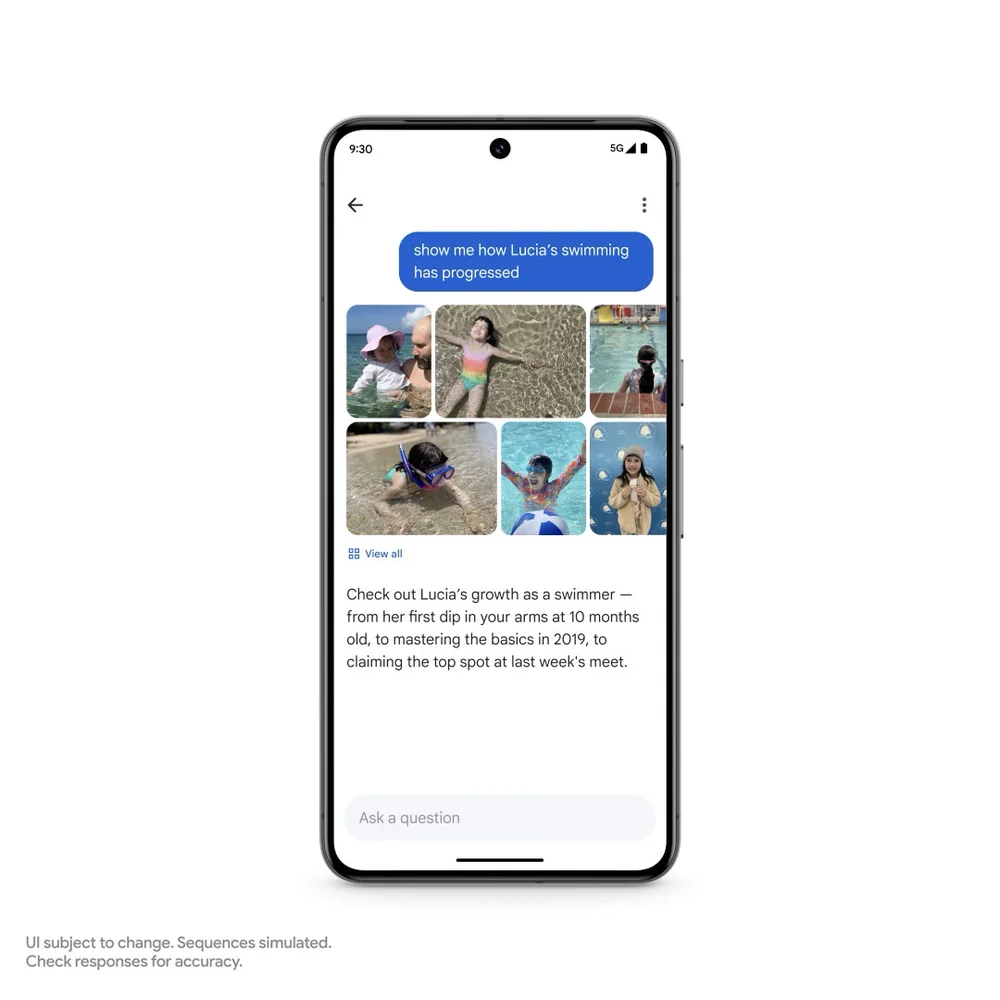

And Ask Photos can help you search your memories in a deeper way. For example, you might be reminiscing about your daughter Lucia’s early milestones. Now, you can ask Photos: “When did Lucia learn to swim?”

And you can follow up with something even more complex: “Show me how Lucia’s swimming has progressed.”

Here, Gemini goes beyond a simple search, recognizing different contexts—ffrom doing laps in the pool to snorkeling in the ocean to the text and dates on her swimming certificates. And Photos packages it all up together in a summary, so you can really take it all in and relive amazing memories all over again. We’re rolling out Ask Photos this summer, with more capabilities to come.

Unlocking more knowledge with multimodality and long context

Unlocking knowledge across formats is why we built Gemini to be multimodal from the ground up. It’s one model, with all the modalities built in. So not only does it understand each type of input — and finds connections between them.

Multimodality radically expands the questions we can ask, and the answers we’ll get back. Long context takes this a step further, enabling us to bring in even more information: hundreds of pages of text, hours of audio or an hour of video, entire code repos…or, if you want, roughly 96 Cheesecake Factory menus.

For that many menus, you’d need a one million token context window, now possible with Gemini 1.5 Pro. Developers have been using it in super interesting ways.

https://www.youtube-nocookie.com/embed/cogrixfRvWw?enablejsapi=1&origin=https%3A%2F%2Fblog.google&widgetid=2

We’ve been rolling out Gemini 1.5 Pro with long context in preview over the last few months. We’ve made a series of quality improvements across translation, coding and reasoning. You’ll see these updates reflected in the model starting today.

Now I’m excited to announce that we’re bringing this improved version of Gemini 1.5 Pro to all developers globally. In addition, Gemini 1.5 Pro with 1 million contexts is now directly available for consumers in Gemini Advanced. This can be used across 35 languages.

Expanding to 2 million tokens in private preview

One million tokens is opening up entirely new possibilities. It’s exciting, but I think we can push ourselves even further.

So today, we’re expanding the context window to 2 million tokens, and making it available for developers in private preview.

It’s amazing to look back and see just how much progress we’ve made in a few months. And this represents the next step on our journey towards the ultimate goal of infinite context.

Bringing Gemini 1.5 Pro to Workspace

So far, we’ve talked about two technical advances: multimodality and long context. Each is powerful on its own. But together, they unlock deeper capabilities, and more intelligence.

This comes to life with Google Workspace.

People are always searching their emails in Gmail. We’re working to make it much more powerful with Gemini. So for example, as a parent, you want to stay informed about everything that’s going on with your child’s school. Gemini can help you keep up.

Now we can ask Gemini to summarize all recent emails from the school. In the background, it’s identifying relevant emails, and even analyzing attachments, like PDFs. You get a summary of the key points and action items. Maybe you were traveling this week and couldn’t make the PTA meeting. The recording of the meeting is an hour long. If it’s from Google Meet, you can ask Gemini to give you the highlights. There’s a parents group looking for volunteers, and you’re free that day. So of course, Gemini can draft a reply.

There are countless other examples of how this can make life easier. Gemini 1.5 Pro is available today in Workspace Labs. Aparna shares more.

Audio outputs in NotebookLM

We just looked at an example with text outputs. But with a multimodal model, we can do so much more.

We’re making progress here, with more to come. Audio overviews in NotebookLM show the progress. It uses Gemini 1.5 Pro to take your source materials and generate a personalized and interactive audio conversation.

This is the opportunity with multimodality. Soon you’ll be able to mix and match inputs and outputs. This is what we mean when we say it’s an I/O for a new generation. But what if we could go even further?

Going further with AI agents

Taking this even further is one of the opportunities we see with AI Agents. I think about them as intelligent systems that show reasoning, planning, and memory. They are able to “think” multiple steps ahead, and work across software and systems, all to get something done on your behalf, and most importantly, under your supervision.

We are still in the early days, but let me show you the kinds of use cases we’re working hard to solve.

Let’s start with shopping. It’s pretty fun to shop for shoes, and a lot less fun to return them when they don’t fit.

Imagine if Gemini could do all the steps for you:

Searching your inbox for the receipt …

Locating the order number from your email…

Filling out a return form…

Even scheduling a UPS pickup.

That’s much easier, right?

Let’s take another example that’s a bit more complex.

Say you just moved to Chicago. You can imagine Gemini and Chrome working together to help you do a number of things to get ready — organizing, reasoning, synthesizing on your behalf.

For example, you’ll want to explore the city and find services nearby — from dry cleaners to dog walkers. And you’ll have to update your new address across dozens of websites.

Gemini can work across these tasks and will prompt you for more information when needed — so you are always in control.

That part is really important — as we prototype these experiences we’re thinking hard about how to do it in a way that’s private, secure and works for everyone.

These are simple use cases but they give you a good sense of the types of problems we want to solve, by building intelligent systems that think ahead, reason, and plan — all on your behalf.

What it means for our mission

The power of Gemini — with multimodality, long context and agents — brings us closer to our ultimate goal: making AI helpful for everyone.

We see this as how we’ll make the most progress against our mission: Organizing the world’s information across every input, making it accessible via any output, and combining the world’s information, with the information in YOUR world, in a way that’s truly useful for you.

Breaking new ground

To realize the full potential of AI, we’ll need to break new ground. The Google DeepMind team has been hard at work on this.

We’ve seen so much excitement around 1.5 Pro and its long context window. But we also heard from developers that they wanted something faster and more cost effective. So tomorrow, we’re introducing Gemini 1.5 Flash, a lighter-weight model built for scale. It’s optimized for tasks where low latency and cost matter most. 1.5 Flash will be available in AI Studio and Vertex AI on Tuesday.

Looking further ahead, we’ve always wanted to build a universal agent that will be useful in everyday life. Project Astra, shows multimodal understanding and real-time conversational capabilities.

https://www.youtube-nocookie.com/embed/nXVvvRhiGjI?enablejsapi=1&origin=https%3A%2F%2Fblog.google&widgetid=3

We’ve also made progress on video and image generation with Veo and Imagen 3, and introduced Gemma 2.0, our next generation of open models for responsible AI innovation. Read more from Demis Hassabis.

Infrastructure for the AI era: Introducing Trillium

Training state-of-the-art models requires a lot of computing power. Industry demand for ML compute has grown by a factor of 1 million in the last six years. And every year, it increases tenfold.

Google was built for this. For 25 years, we’ve invested in world-class technical infrastructure. From the cutting-edge hardware that powers Search, to our custom tensor processing units that power our AI advances.

Gemini was trained and served entirely on our fourth and fifth generation TPUs. And other leading AI companies, including Anthropic, have trained their models on TPUs as well.

Today, we’re excited to announce our 6th generation of TPUs, called Trillium. Trillium is our most performant and most efficient TPU to date, delivering a 4.7x improvement in compute performance per chip over the previous generation, TPU v5e.

We’ll make Trillium available to our Cloud customers in late 2024.

Alongside our TPUs, we’re proud to offer CPUs and GPUs to support any workload. That includes the new Axion processors we announced last month, our first custom Arm-based CPU that delivers industry-leading performance and energy efficiency.

We’re also proud to be one of the first Cloud providers to offer Nvidia’s cutting-edge Blackwell GPUs, available in early 2025. We’re fortunate to have a longstanding partnership with NVIDIA, and are excited to bring Blackwell’s breakthrough capabilities to our customers.

Chips are a foundational part of our integrated end-to-end system. From performance-optimized hardware and open software to flexible consumption models. This all comes together in our AI Hypercomputer, a groundbreaking supercomputer architecture.

Businesses and developers are using it to tackle more complex challenges, with more than twice the efficiency relative to just buying the raw hardware and chips. Our AI Hypercomputer advancements are made possible in part because of our approach to liquid cooling in our data centers.

We’ve been doing this for nearly a decade, long before it became state-of-the-art for the industry. And today, our total deployed fleet capacity for liquid cooling systems is nearly 1 gigawatt and growing—tthat’s close to 70 times the capacity of any other fleet.

Underlying this is the sheer scale of our network, which connects our infrastructure globally. Our network spans more than 2 million miles of terrestrial and subsea fiber: over 10 times (!) the reach of the next leading cloud provider.

We will keep making the investments necessary to advance AI innovation and deliver state-of-the-art capabilities.

The most exciting chapter of Search yet

One of our greatest areas of investment and innovation is in our founding product, Search. 25 years ago we created Search to help people make sense of the waves of information moving online.

With each platform shift, we’ve delivered breakthroughs to help answer your questions better. On mobile, we unlocked new types of questions and answers — using better context, location awareness, and real-time information. With advances in natural language understanding and computer vision, we enabled new ways to search, with a voice, or a hum to find your new favorite song; or with an image of that flower you saw on your walk. And now you can even Circle to Search those cool new shoes you might want to buy. Go for it, you can always return them!

Of course, Search in the Gemini Era will take this to a whole new level, combining our infrastructure strengths, the latest AI capabilities, our high bar for information quality, and our decades of experience connecting you to the richness of the web. The result is a product that does the work for you.

Google Search is generative AI at the scale of human curiosity. And it’s our most exciting chapter of Search yet. Read more about the Gemini era of Search from Liz Reid.

More intelligent Gemini experiences

Gemini is more than a chatbot; it’s designed to be your personal, helpful assistant that can help you tackle complex tasks and take actions on your behalf.

Interacting with Gemini should feel conversational and intuitive. So we’re announcing a new Gemini experience that brings us closer to that vision called Live, which allows you to have an in-depth conversation with Gemini using your voice. We’ll also be bringing 2 million tokens to Gemini Advanced later this year, making it possible to upload and analyze super-dense files like video and long code. Sissie Hsiao shares more.

Gemini on Android

With billions of Android users worldwide, we’re excited to integrate Gemini more deeply into the user experience. As your new AI assistant, Gemini is here to help you anytime, anywhere. And we’ve incorporated Gemini models into Android, including our latest on-device model: Gemini Nano with Multimodality, which processes text, images, audio, and speech to unlock new experiences while keeping information private on your device. Sameer Samat shares the Android news here.

Our responsible approach to AI

We continue to approach the opportunity AI boldly, with a sense of excitement. We’re also making sure we do it responsibly. We’re developing a cutting-edge technique we call AI-assisted red teaming, that draws on Google DeepMind’s gaming breakthroughs like AlphaGo, to improve our models. Plus, we’ve expanded SynthID, our watermarking tool that makes AI-generated content easier to identify, to two new modalities: text and video. James Manyika shares more.

Creating the future together

All of this shows the important progress as we take a bold and responsible approach to making AI helpful for everyone.

We’ve been AI-first in our approach for a long time. Our decades of research leadership have pioneered many of the modern breakthroughs that power AI progress, for us and for the industry. On top of that we have:

- World-leading infrastructure built for the AI era

- Cutting-edge innovation in Search, now powered by Gemini

- Products that help at extraordinary scale — including 15 products with half a billion users

- And platforms that enable everyone — partners, customers, creators, and all of you — to invent the future.

This progress is only possible because of our incredible developer community. You are making it real, through the experiences and applications you build every day. So, to everyone here in Shoreline and the millions more watching around the world, here’s to the possibilities ahead and creating them together.